We all know how powerful SSIS can be when handling complex data integration projects. Yet even seasoned developers sometimes overlook specific error codes that quietly derail a package. One of those sneaky culprits is SSIS 469, which signals a data flow hiccup that often hides in plain sight. What really triggers this error, and how can you spot it before it stops your ETL pipeline?

It turns out understanding the exact conditions behind SSIS 469 can save you hours of debugging. By digging into component configurations, data types, and runtime memory clues, you can pinpoint the root issue fast. Equipped with that knowledge, you’ll avoid painful reruns and deliver clean, reliable data loads every time.

Understanding SSIS 469

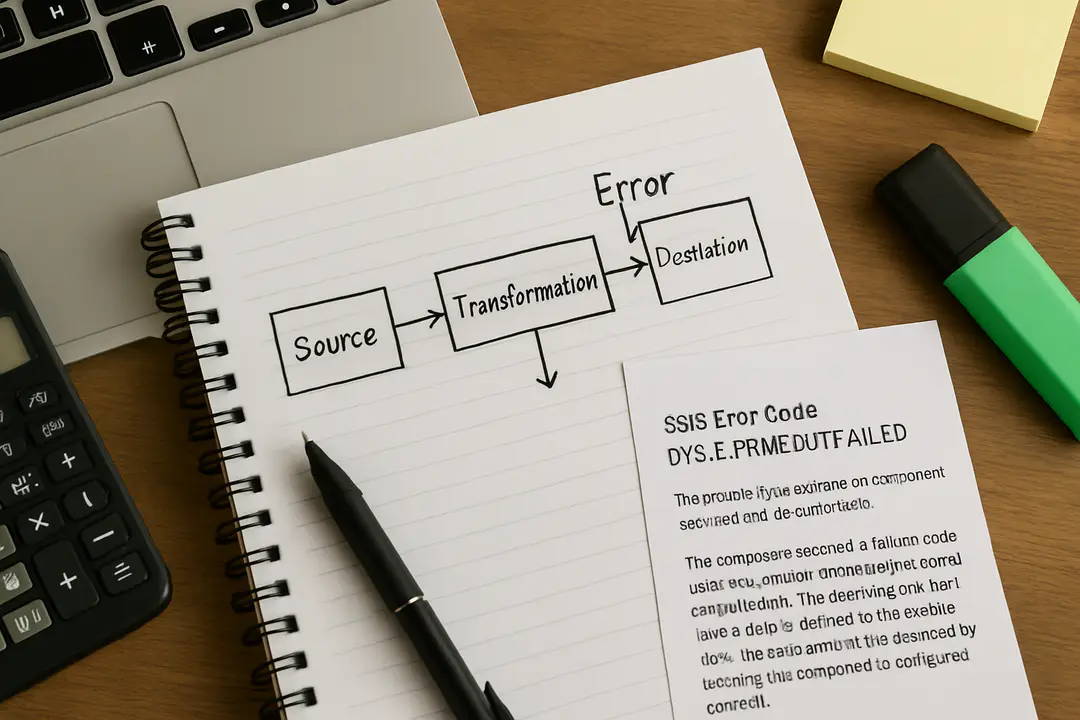

SSIS error 469 typically pops up in data flow tasks when a component cannot process incoming rows as expected. You might see a message like “Data conversion failed” or “Buffer manager spilled to disk,” though the detail varies by task type. The key point is that the engine flagged a row or column it can’t push through the pipeline.

Common scenarios include mismatched column lengths, overflow on numeric types, or even null values in non-nullable columns. Since SSIS buffers rows before writing them, a mismatch can overflow memory or force disk spooling, which triggers code 469. You might miss it if logging is only set at the control flow level.

Practical tip: enable data viewers on suspect paths to catch the first offending row. Even a quick preview can reveal a string too long or an unexpected null. That simple step often spots the culprit in seconds.
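When a data viewer isn’t practical, for example on a build server, the same check can be approximated outside SSIS. The sketch below scans a delimited extract and reports the first row that breaks a length or null rule. The column names, limits, and sample data are hypothetical; in a real package they would come from the destination’s column metadata.

```python
import csv
import io

# Hypothetical column rules: name -> (max_length, nullable).
RULES = {
    "customer_id": (10, False),
    "name": (20, False),
    "note": (50, True),
}

def first_offending_row(text, rules):
    """Return (row_number, column, reason) for the first bad row, or None."""
    reader = csv.DictReader(io.StringIO(text))
    for row_number, row in enumerate(reader, start=1):
        for column, (max_length, nullable) in rules.items():
            value = row.get(column) or ""  # treat a missing field as empty
            if value == "" and not nullable:
                return (row_number, column, "unexpected null")
            if len(value) > max_length:
                return (row_number, column, "string too long")
    return None

# Made-up extract: row 2 has an empty name, row 3 has an overlong one.
SAMPLE = (
    "customer_id,name,note\n"
    "1001,Ada Lovelace,first order\n"
    "1002,,missing name\n"
    "1003,A name that is far longer than twenty characters,ok\n"
)

print(first_offending_row(SAMPLE, RULES))
```

Stopping at the first failure mirrors what you would do with a data viewer: find one bad row, fix the mapping, and run again.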

Common Root Causes

Before launching into deep troubleshooting, list out likely suspects around your data flow. Start with the source component: is it correctly mapping columns? Are you reading from a flat file, database table, or external API? Each source has quirks. For example, flat files may pad shorter rows, and APIs can change schemas without notice.

Next, inspect data type mappings. A smallint in SQL Server holds values from -32,768 to 32,767 and maps to DT_I2 in SSIS. Pushing a larger value into a DT_I2 column overflows it and triggers 469. Similarly, date formats or Unicode issues often slip past basic checks. For secure pipeline design, brush up on data encryption best practices that also discuss proper type handling when protecting PII.
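To make the range limits concrete, here is a minimal sketch that checks a batch of values against the bounds of the SSIS integer types. The type names are real SSIS data types; the helper function and the sample values are made up for illustration.

```python
# Value ranges for common SSIS integer types (signed and unsigned one-byte,
# then signed two-, four-, and eight-byte).
INTEGER_RANGES = {
    "DT_I1": (-2**7, 2**7 - 1),
    "DT_UI1": (0, 2**8 - 1),
    "DT_I2": (-2**15, 2**15 - 1),
    "DT_I4": (-2**31, 2**31 - 1),
    "DT_I8": (-2**63, 2**63 - 1),
}

def out_of_range(values, ssis_type):
    """Return the values that would overflow the given SSIS integer type."""
    low, high = INTEGER_RANGES[ssis_type]
    return [value for value in values if not low <= value <= high]

# Hypothetical source values headed for a DT_I2 (smallint) column.
print(out_of_range([120, 32767, 32768, -40000], "DT_I2"))
```

Running a check like this against a sample of source data before a load shows whether a column needs widening, for instance from DT_I2 to DT_I4.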

Memory pressure can also cause this error. If multiple large columns feed into transformation components, SSIS may spill buffers to disk. While that might degrade performance, it also risks partial loads or component failure. Balancing batch sizes and buffer temp resources is crucial.
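A rough way to reason about buffer sizing is to estimate how many rows fit in one buffer. The sketch below is a simplification: it caps the row count by both DefaultBufferMaxRows and DefaultBufferSize, using the SSIS defaults of 10,000 rows and 10 MB, and ignores the engine’s internal minimum buffer size. The row widths are hypothetical.

```python
DEFAULT_BUFFER_SIZE = 10 * 1024 * 1024  # bytes; the SSIS default of 10 MB
DEFAULT_BUFFER_MAX_ROWS = 10_000        # the SSIS default

def estimated_rows_per_buffer(row_width_bytes,
                              buffer_size=DEFAULT_BUFFER_SIZE,
                              max_rows=DEFAULT_BUFFER_MAX_ROWS):
    """Approximate rows per buffer: limited by row cap and by byte size."""
    if row_width_bytes <= 0:
        raise ValueError("row width must be positive")
    return min(max_rows, buffer_size // row_width_bytes)

# A narrow 200-byte row is limited by the row cap,
# while a wide 4,000-byte row is limited by the buffer size.
for width in (200, 4000):
    print(width, estimated_rows_per_buffer(width))
```

The takeaway is that wide rows shrink the number of rows per buffer, so a few large columns can multiply the number of buffers a data flow needs.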

Quick tip: run your package with 1-2 rows per batch during testing. This isolates type or length issues quickly without overwhelming memory.

Troubleshooting Steps

- Enable Detailed Logging: Turn on Data Flow Task logging for events such as OnError, BufferSizeTuning, and PipelineComponentTime to see which component raised the fault.

- Use Data Viewers: Attach them to each path. Preview rows to spot mismatches before they break the flow.

- Check Data Conversions: Open each conversion component. Confirm length, precision, and scale match source metadata.

- Monitor Memory Settings: In the Data Flow Task’s properties, adjust DefaultBufferMaxRows and DefaultBufferSize. Smaller buffers can reduce spills.

- Isolate Components: Disable downstream tasks. Run the source and transform alone to confirm where the error originates.

- Inspect Error Outputs: Many components let you redirect bad rows. Capture those to a flat file for offline review.

Following these steps in order narrows the search fast. You’ll often find it’s a single mismatched column or tiny overflow.
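Once bad rows are redirected to a flat file, a quick tally shows whether one column accounts for most failures. SSIS error outputs add ErrorCode and ErrorColumn columns to each redirected row. The sketch below assumes the file is comma-delimited with a header row. The other column names and every value in the sample, including the codes, are illustrative rather than real SSIS output.

```python
import csv
import io
from collections import Counter

def summarize_errors(text):
    """Count redirected rows per (ErrorCode, ErrorColumn) pair."""
    reader = csv.DictReader(io.StringIO(text))
    return Counter((row["ErrorCode"], row["ErrorColumn"]) for row in reader)

# Made-up error-output extract; ErrorColumn holds a column identifier.
SAMPLE = (
    "order_id,amount,ErrorCode,ErrorColumn\n"
    "17,99999,-100,42\n"
    "18,88888,-100,42\n"
    "23,,-200,57\n"
)

for (code, column), count in summarize_errors(SAMPLE).most_common():
    print(code, column, count)
```

If one pair dominates the tally, that column’s mapping is the first place to look.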

Performance Optimization Tips

Fixing SSIS 469 is one side of the coin. You also want your package to run smoothly. Here are some proven optimizations:

- Adjust Buffer Settings: Increase DefaultBufferSize above its 10 MB default when rows are wide, but raise it in steps and confirm the server has memory to spare.

- Minimize Transformations: Push conversions to the source query if possible. Let the database engine handle heavy lifting.

- Use Fast Load Options: In OLE DB destinations, enable “Table lock” and set a suitable “Rows per batch.”

- Filter Early: Apply WHERE clauses or conditional splits as soon as possible to reduce row counts.

- Disable Unused Columns: Only read columns you need. This lowers buffer footprint and speeds I/O.

These small tweaks often shave minutes off run times and prevent buffer-related errors.

Logging and Monitoring

Once your package runs smoothly, keep an eye on performance and errors in production. Consistent tracking helps you catch new issues quickly. SSIS Catalog logging captures execution durations, errors, and warnings at its default Basic level; per-path row counts need the Verbose level. Review those logs weekly to spot trends.

If you notice spikes in buffer spills or execution time, drill down into individual tasks. A data flow that suddenly grows indicates a source change or unexpected data volume. You might need to adjust buffer settings, add caches, or rewrite a query.
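Spotting a spike can be automated with a simple statistical threshold. The sketch below flags a run whose duration is well above the recent average. The three-standard-deviation threshold and the sample durations are assumptions; in practice the history might be pulled from the SSIS Catalog’s execution records.

```python
from statistics import mean, stdev

def is_spike(history, latest, threshold=3.0):
    """Flag `latest` if it exceeds mean + threshold * stdev of `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    baseline = mean(history)
    spread = stdev(history)
    return latest > baseline + threshold * spread

# Hypothetical run times in seconds for the last five executions.
RECENT_RUNS = [300, 310, 295, 305, 290]

print(is_spike(RECENT_RUNS, 320))
print(is_spike(RECENT_RUNS, 480))
```

A check like this is deliberately crude. It works as a first alert that prompts you to drill into individual tasks, not as a diagnosis.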

For deeper hardware insights, benchmark your SSIS server and keep detailed performance logs of the results. Those tests highlight I/O bottlenecks and memory throughput that directly affect ETL reliability.

Tip: set up alerts for execution failures. A simple email task on failure ensures you respond before a downstream process breaks.

Preventing Future Errors

After patching today’s issue, look at broader steps to avoid repeats. Start with strong package validation. Use error outputs or a Conditional Split to redirect rows that don’t meet criteria, and reserve precedence constraints for controlling which tasks run next. That way, edge cases don’t break the entire flow.

Maintain clear documentation of column mappings and transformation logic. When someone updates a source system, a quick glance at your docs reveals what needs a change. Pair that with version control for your .dtsx files.
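Documented mappings become more useful when they can be compared against the live source automatically. Here is a minimal sketch, assuming both the documented mapping and the current schema are available as plain column-to-type dictionaries. The column names and types are hypothetical.

```python
def find_drift(documented, current):
    """Compare two column -> type mappings and report the differences."""
    shared = set(documented) & set(current)
    return {
        "missing": sorted(set(documented) - set(current)),  # dropped upstream
        "added": sorted(set(current) - set(documented)),    # new upstream
        "changed": sorted(c for c in shared if documented[c] != current[c]),
    }

# Hypothetical documented mapping versus what the source returns today.
DOCUMENTED = {"order_id": "DT_I4", "amount": "DT_CY", "region": "DT_WSTR"}
CURRENT = {"order_id": "DT_I8", "amount": "DT_CY", "channel": "DT_WSTR"}

print(find_drift(DOCUMENTED, CURRENT))
```

Keeping the documented mapping in version control next to the .dtsx files means the comparison can run whenever either side changes.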

Invest in ongoing skills with structured training modules. Consistent practice with new SSIS features—like improved buffer tuning and advanced logging—keeps your pipelines resilient.

Finally, automate package validation in your CI/CD pipeline. A simple build-and-validate step on each commit catches metadata drift and flags issues before they hit production.
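One way to wire this into CI is to validate each changed package with dtexec, which can check a package without executing it. The sketch below only builds the command lines, using the dtexec /File, /Validate, and /WarnAsError options. The file paths are hypothetical, and it assumes dtexec is on the build agent’s PATH.

```python
def build_validate_commands(paths, dtexec="dtexec", warn_as_error=False):
    """Return one dtexec argument list per .dtsx file in `paths`."""
    commands = []
    for path in sorted(paths):
        if not path.lower().endswith(".dtsx"):
            continue  # skip anything that is not an SSIS package file
        command = [dtexec, "/File", path, "/Validate"]
        if warn_as_error:
            command.append("/WarnAsError")  # treat warnings as failures
        commands.append(command)
    return commands

# Hypothetical list of files touched by a commit.
CHANGED_FILES = ["etl/LoadOrders.dtsx", "README.md", "etl/LoadCustomers.dtsx"]

for command in build_validate_commands(CHANGED_FILES):
    print(" ".join(command))
```

In a real pipeline, each argument list could be passed to `subprocess.run(command, check=True)` so that a nonzero exit code from dtexec fails the build.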

Conclusion

SSIS 469 errors can feel cryptic at first, but they’re usually a sign of deeper data or buffer issues. By understanding the root causes, following systematic troubleshooting steps, and applying performance optimizations, you’ll get past the error quickly. Robust logging and proactive monitoring keep your ETL reliable over time. Finally, clear documentation and regular training cement those gains and stop 469 from sneaking back in. Armed with these practices, you’ll deliver smooth, error-free data flows that power your critical business processes without surprises.